Table of Contents

Introduction

Terraform Provider

This post explains the reasoning behind the terraform-provider-teamcity project. We’ll look at how to apply pipelines as code to JetBrains’ TeamCity CI server using Terraform.

Why Pipelines as Code?

Nowadays, Continuous Integration is a common practice in most software development workflows. The idea of having code managed, tested and deployed from a central location is mainstream enough that most take it for granted. This practice’s maturity allowed the evolution of Continuous Delivery and Continuous Deployment, which require that a given repository is always kept in a state stable enough to be shipped to production at any time.

To successfully enable this practice, however, most teams rely on heavy automation and plumbing, creating pipelines that deliver code from development to production and using CI systems (Jenkins, TeamCity, GitLab, Travis, etc.) to perform the required steps in an automated fashion.

Assembling a pipeline manually for a single project is not a hard task in itself. Issues start to arise when you are a member of a Platform/Build team that creates pipelines for other teams’ developers, or when you need to expose CI/CD as a service to other users. Microservices are also a big driver for adoption, as organizations need the agility to quickly manage the lifecycle of deployable services, sometimes hundreds or thousands of them. At a certain scale, configuring and maintaining these pipelines manually starts to feel clumsy.

Jenkins users have been reaping the benefits of this technique for quite some time. Pipelines as code also appeared on ThoughtWorks’ Radar as “Adopt”.

"Build as code and pipeline as code would have much the same definition. They are an approach to defining your builds and pipelines in diffable, readable files checked into source control that can then be treated just like any software system."

For managed services (TravisCI, AppVeyor, CodeShip, to name a few), it is common to have a file that describes a pipeline and exposes its extension points while encapsulating intricacies, creating a powerful abstraction that makes CI/CD very easy to set up and get started with.

TeamCity

JetBrains’ TeamCity is a very powerful, user-friendly build server that just works™. I’ve worked with other build systems in the past, but grew quite fond of TeamCity the longer I used it, for its simplicity and reliability. It has a proprietary version of Pipelines as Code using a Kotlin DSL, where you can either export an existing project’s settings to Kotlin format or create everything from scratch. They have a blog series that goes into detail on how to use this feature.

Why not Kotlin?

I’ve long had a desire to configure TeamCity builds via code, but I hit several limitations. First, when I started, the Kotlin DSL was not yet available. The only option was to use XML-style settings, which could be used to version configurations, but that is not exactly code, right?

Second, I found that information on the (back then) recently released Kotlin DSL was basically a page in the TeamCity documentation and the blog series mentioned previously. I found it hard to find deeper information, struggled with the inability to reuse code in my configurations and lacked a proper development environment. These reasons pushed me away from using the Kotlin DSL for what I was trying to achieve.

Terraform

HashiCorp’s Terraform, an infrastructure-as-code tool best known in the community for managing cloud providers and other systems, provides a powerful, declarative way of defining configurations for several types of upstream systems. From my previous dabblings with it, I remembered using Terraform not just to provision and maintain infrastructure, but also to configure platforms such as Datadog, GitHub and RunScope. Managing these platforms required custom providers: plugins, developed either by HashiCorp or by the community, that extend Terraform to many uses beyond provisioning infrastructure.

By extending Terraform with custom providers, it is possible to maintain any API-enabled system by leveraging Terraform’s core as a powerful resource-manipulation framework. The benefits of "infrastructure as code" are extended to any configurable API.

Terraform configurations are expressed in a simple language, HCL, and are declarative in nature, describing the desired state rather than adopting a procedural style. This allows the full state to be captured in code. Other Terraform features were also very attractive, such as:

- Codify configuration for multiple systems together

- Easy to validate, effectively turning configurations into a mini-DSL

- Reusability, with versioning, via Terraform Modules

- Zero-friction CI: it is easy to set up pipelines that build other pipelines
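
To illustrate the reusability point, a pipeline definition packaged as a Terraform module could be consumed as in the sketch below. The module name, repository URL, tag and input names are all hypothetical placeholders; only the module block and the git source with a ref query parameter are standard Terraform.

```hcl
# Hypothetical module call: the repository URL, module path, tag and
# input names are placeholders, not taken from a real project.
module "orders_service_pipeline" {
  # Pinning "ref" to a tag gives every consumer a versioned pipeline definition.
  source = "git::https://example.com/platform/pipeline-modules.git//dotnet-service?ref=v1.2.0"

  # Inputs that the module author would declare as variables inside the module.
  project_name      = "Orders Service"
  github_repository = "example-org/orders-service"
}
```

Upgrading a team to a newer pipeline definition then becomes a one-line change to the ref, reviewed like any other code change.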

Writing a custom Terraform provider for TeamCity

After deciding that Terraform was the way forward, the challenge was to write a Terraform Provider in Golang, an ecosystem I had no experience with. Custom provider development can be trivial if you have the experience and a Golang client for the API you’re trying to automate. Unfortunately, I had neither 😢.

At first, I tried auto-generating a Golang client for TeamCity’s API by using go-swagger. The generated client was very unfriendly and leaked a lot of peculiarities of TeamCity’s API into the provider implementation, resulting in convoluted code that was hard to maintain.

Since there was no other usable open-source Golang client for TeamCity, I had to write one, considering the use cases I had in mind for the provider. You can find the code for this project here. Experiences on that will serve as input for future writings.

Thus, I’ve created a custom provider that uses this client, which is published at https://github.com/cvbarros/terraform-provider-teamcity.

Modelling TeamCity Resources

TeamCity’s base resources are projects, VCS roots and build configurations, so they were the starting points for the provider implementation. The first sample is a Terraform configuration for a very simple pipeline with one build configuration that performs a single build step.

The sample manages a Project named “Simple Project”, a Git VCS Root with some basic settings and a Build Configuration with a single PowerShell step that invokes a build script placed in the repository root folder.
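
As an illustration, a configuration matching that description could be sketched as follows. The resource types and the interpolated references are the ones named in this post; the argument names (fetch_url, auth, step, vcs_root and so on), the github_auth_password variable and the literal values are assumptions that should be checked against the provider’s documentation. Provider configuration (server address and credentials) is left out.

```hcl
# Sketch only: resource types and interpolated references come from the
# article; every argument name below is an assumption to verify against
# the provider's documentation.

resource "teamcity_project" "project" {
  name = "Simple Project"
}

resource "teamcity_vcs_root_git" "project_vcs_root" {
  name       = "Application" # hypothetical display name
  project_id = "${teamcity_project.project.id}"

  fetch_url      = "https://github.com/${var.github_repository}"
  default_branch = "refs/heads/master"

  auth {
    type     = "userpass"
    username = "${var.github_auth_username}"
    password = "${var.github_auth_password}" # hypothetical variable
  }
}

resource "teamcity_build_config" "pull_request" {
  name       = "Pull Request" # hypothetical display name
  project_id = "${teamcity_project.project.id}"

  # Single PowerShell step invoking a script in the repository root.
  step {
    type = "powershell"
    file = "build.ps1" # hypothetical script name
  }

  vcs_root {
    id = "${teamcity_vcs_root_git.project_vcs_root.id}"
  }
}
```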

Let’s examine some interesting aspects covered in this basic example.

Resource Dependencies are handled automatically by Terraform

Notice how the teamcity_build_config.pull_request resource references the Project and VCS Root through the ${teamcity_vcs_root_git.project_vcs_root.id} and ${teamcity_project.project.id} interpolated variables. Terraform builds the dependency graph from these references automatically, ensuring resources are managed in the right order. In our case, it’s not possible to create VCS Roots or Build Configurations without having a Project (VCS Roots can be created in the _Root project, but that’s an exception 😎).

Variables

Using ${var.github_auth_username} and ${var.github_repository} allows these values to be specified via the environment or directly when planning/applying configuration.
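
For those references to resolve, the variables need to be declared. A minimal sketch, assuming no defaults so that Terraform asks for a value when none is supplied (the descriptions and the example values in the comments are placeholders):

```hcl
# Declarations for the two variables referenced in the article.
# Without a default, Terraform prompts for a value unless one is provided.
variable "github_auth_username" {
  description = "User name used to authenticate against GitHub"
}

variable "github_repository" {
  description = "Repository the VCS root points at"
}

# Ways to supply values (standard Terraform behaviour):
#   environment:  TF_VAR_github_auth_username=some-user terraform plan
#   command line: terraform apply -var 'github_repository=example-org/example-repo'
```

Keeping credentials out of the configuration files and passing them through the environment also means the same code can be applied against different TeamCity servers or repositories.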

Configuration is fully captured in code

You can still rely on TeamCity’s interface to confirm everything is correct. But the code is simple and concise enough to read and understand how your build is behaving.

Terraform’s declarative nature makes it simple to realize what has been configured.

A More Advanced example

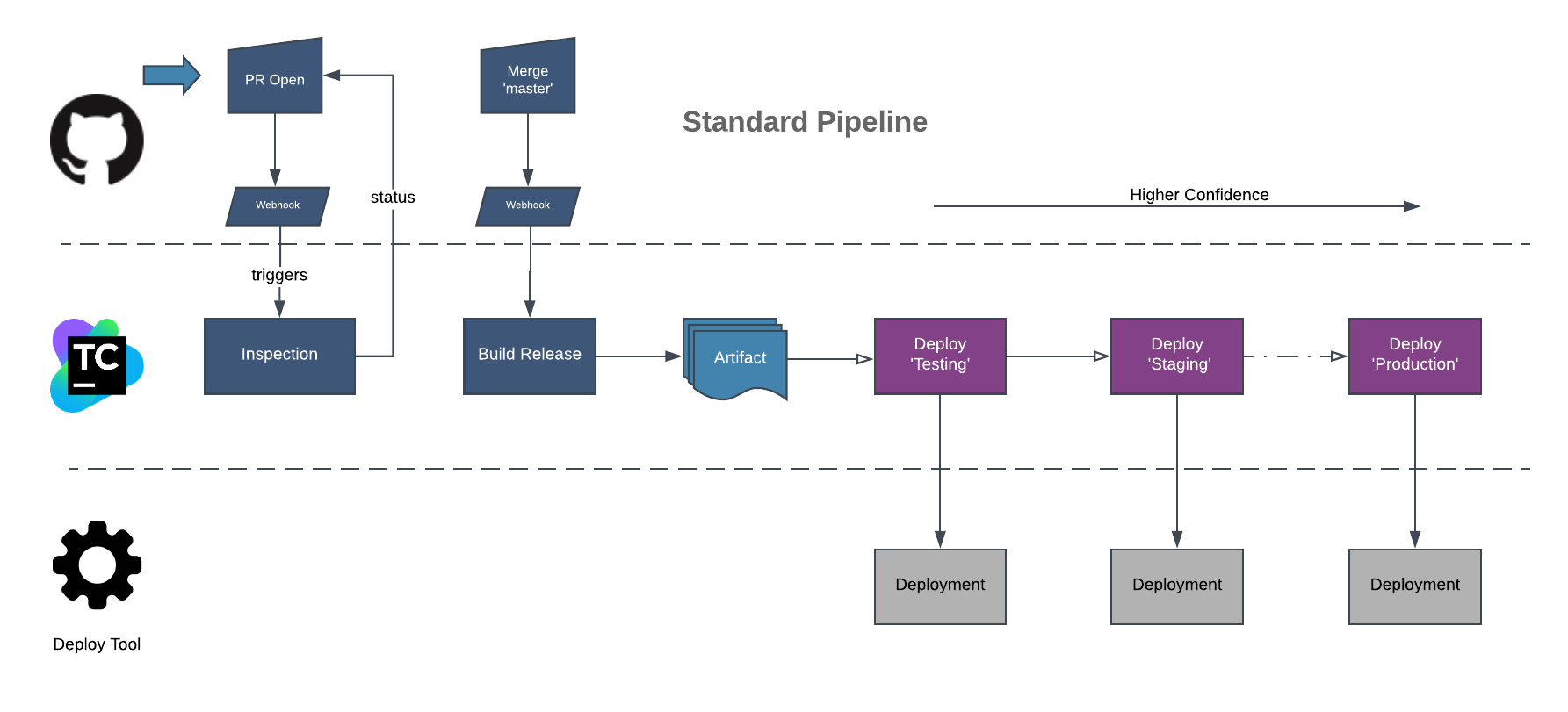

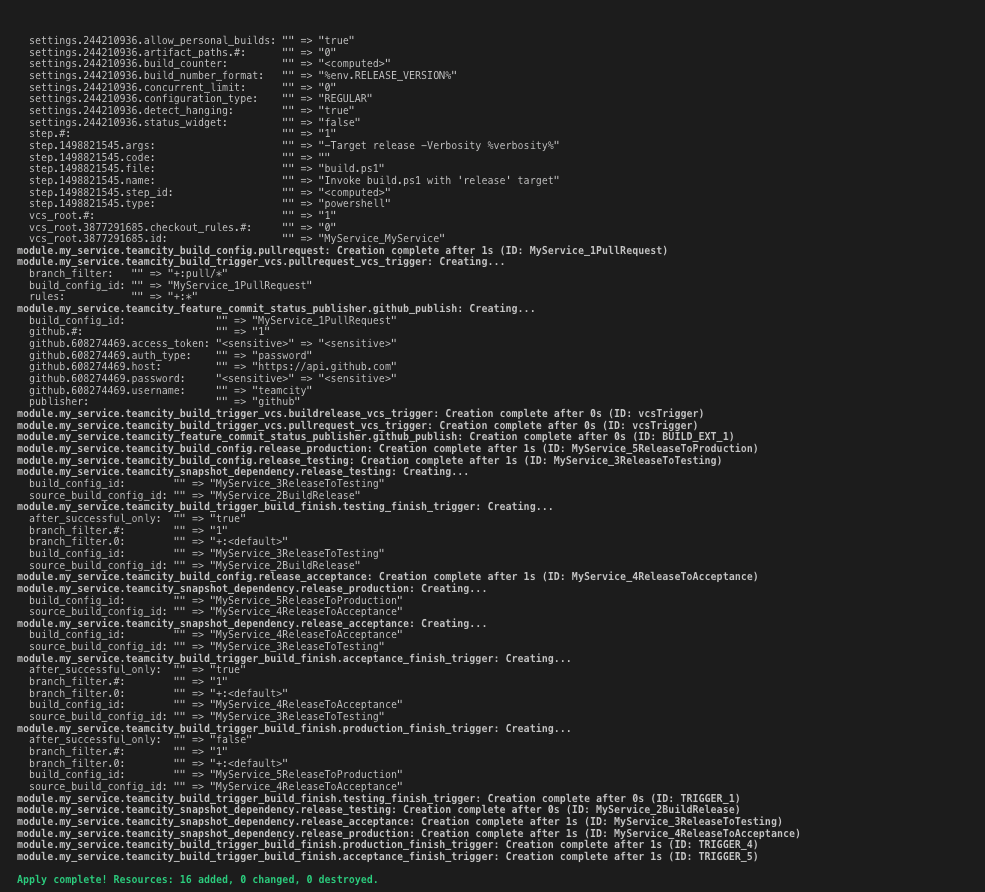

However, we’re talking about pipelines as code. In a real-world scenario, we’d have multiple builds chained together, deployment steps and integrations. Things can get messy. The planned MVP for the provider had to cover one of our standardized pipelines for .NET applications, represented by the diagram:

Pipeline Anatomy

Inspection runs basic, fast checks such as unit tests and linting, and gives a green status if a pull request is eligible for merging. Once merged, changes trigger a Build Release, which performs the same inspection plus additional integration tests and produces a deployable artifact.

Further build configurations (in purple) form a deployment pipeline, promoting the artifact across environments once checks are performed and increasing confidence in the artifact. In each of those stages, the deployed artifact is validated on the environment. One peculiarity is a requirement that only an agent in a given environment can perform checks such as system-wide acceptance tests, so agent requirements are needed.

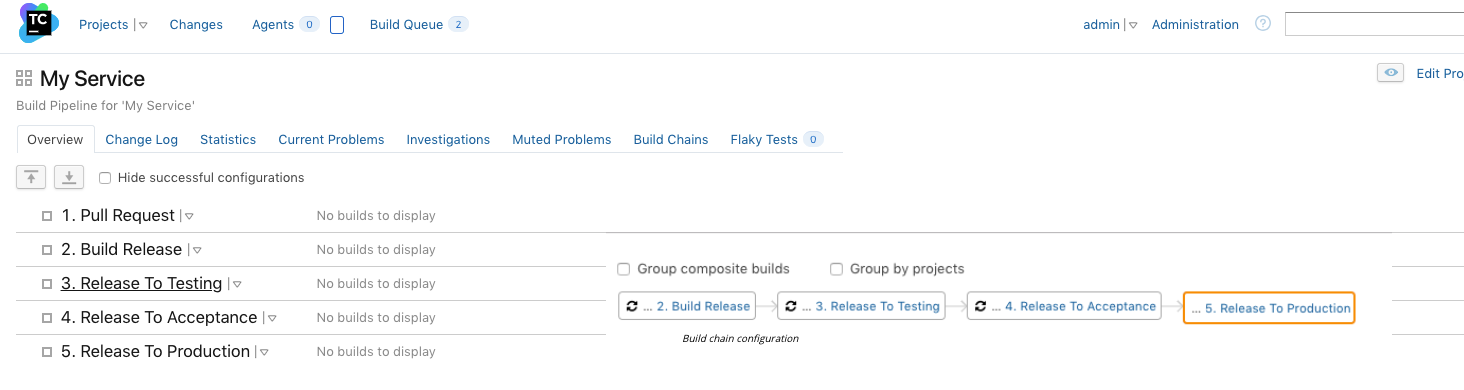

Each stage is represented by a different Build Configuration in TeamCity, chained together with the help of Build Triggers and Dependencies. The final step, Deploy to Production, is manually triggered by a user in this example. Continuous Deployment is enabled automatically up to the Staging environment.

Full resource graph

Fully representing this workflow in Terraform requires the following resources:

- Project

- VCS Root

- Build Configuration

- Commit Status Publisher

- Snapshot Dependencies

- Build Trigger - VCS

- Build Trigger - Finish Build

- Agent Requirements

- Several parameters for interacting with GitHub, the deployment tool and external dependencies
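
To give an idea of how two of these stages could be chained, here is a minimal sketch. The resource types other than teamcity_project and teamcity_build_config, all argument names, the “Deploy to Staging” name and the agent parameter are assumptions made for illustration and must be verified against the provider’s documentation.

```hcl
# Sketch only: resource types beyond project/build_config and all argument
# names are assumptions, not confirmed provider API.

resource "teamcity_project" "project" {
  name = "Simple Project"
}

resource "teamcity_build_config" "build_release" {
  name       = "Build Release"
  project_id = "${teamcity_project.project.id}"
}

resource "teamcity_build_config" "deploy_staging" {
  name       = "Deploy to Staging" # hypothetical stage name
  project_id = "${teamcity_project.project.id}"
}

# The staging deployment consumes exactly the revision built by Build Release.
resource "teamcity_snapshot_dependency" "staging_on_release" {
  build_config_id        = "${teamcity_build_config.deploy_staging.id}"
  source_build_config_id = "${teamcity_build_config.build_release.id}"
}

# Start the staging deployment whenever Build Release finishes successfully.
resource "teamcity_build_trigger_build_finish" "staging_after_release" {
  build_config_id             = "${teamcity_build_config.deploy_staging.id}"
  source_build_config_id      = "${teamcity_build_config.build_release.id}"
  after_successful_build_only = true
}

# Restrict the deployment checks to agents located in the target environment.
resource "teamcity_agent_requirement" "staging_agent" {
  build_config_id = "${teamcity_build_config.deploy_staging.id}"
  condition       = "equals"
  name            = "env.ENVIRONMENT" # hypothetical agent parameter
  value           = "staging"
}
```

The manually triggered production stage would follow the same pattern, with a snapshot dependency but no finish-build trigger.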

And the result in the TeamCity interface:

Show me the code!

All the code for the previous example can be found on

Conclusion

Having your pipelines defined in code can greatly improve their quality, consistency, reproducibility and maintainability. It creates the right atmosphere for a team to automate the automation. Heck, you can even have the server build its own pipelines that were defined in code. How 😎 is that?

This practice allows a whole different class of improvements such as linting, testing, code generation and reuse, that wouldn’t be possible otherwise.

In the next posts we’ll dive into how to compose pipeline features by creating abstractions and how to automate several systems together using Terraform.

The provider project is available at https://github.com/cvbarros/terraform-provider-teamcity.

Feel free to contribute by opening issues, pull requests and giving feedback!

Happy automating!
